Add `impl_tag!` macro to implement `Tag` for tagged pointers easily

r? `@Nilstrieb`

This should also lift the need to think about safety from callers (`impl_tag!` is robust-ish; see the macro issue) and removes the possibility of writing a "weird" `Tag` impl.
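To illustrate the idea, here is a minimal sketch of a macro that derives a tag encoding from a list of enum variants. The `Tag` trait below is a hypothetical, simplified, safe stand-in (not rustc's actual trait), and `Mode` is an invented example type. The point is that the bit count and both conversions are generated from the same variant list, so a caller cannot make them disagree.

```rust
/// Hypothetical, simplified tag trait. This is an assumption for the sketch,
/// not the trait used in rustc.
pub trait Tag: Copy {
    /// Number of low pointer bits needed to store any tag value.
    const BITS: u32;
    fn into_usize(self) -> usize;
    fn from_usize(tag: usize) -> Option<Self>;
}

/// Sketch of an `impl_tag!`-style macro for fieldless enums.
macro_rules! impl_tag {
    (impl Tag for $Self:ident; $($variant:ident),+ $(,)?) => {
        impl Tag for $Self {
            const BITS: u32 = {
                let count = [$(stringify!($variant)),+].len();
                // Bits needed to represent the indices 0..count.
                usize::BITS - (count - 1).leading_zeros()
            };

            fn into_usize(self) -> usize {
                // Compile-time check that every variant was listed.
                match self { $( $Self::$variant => {} )+ }
                let mut index = 0usize;
                $(
                    if let $Self::$variant = self {
                        return index;
                    }
                    index += 1;
                )+
                let _ = index;
                unreachable!("all variants are covered by the match above")
            }

            fn from_usize(tag: usize) -> Option<Self> {
                let mut index = 0usize;
                $(
                    if tag == index {
                        return Some($Self::$variant);
                    }
                    index += 1;
                )+
                let _ = index;
                None
            }
        }
    };
}

/// Invented example type for the sketch.
#[derive(Clone, Copy, Debug, PartialEq, Eq)]
pub enum Mode {
    Off,
    Read,
    Write,
}

impl_tag! { impl Tag for Mode; Off, Read, Write }
```

Forgetting a variant in the list fails to compile because of the exhaustive `match`, which is one way a macro can take the safety reasoning off the caller.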

Encode hashes as bytes, not varint

In a few places, we store hashes as `u64` or `u128` and then apply `derive(Decodable, Encodable)` to the enclosing struct/enum. It is more efficient to encode hashes directly than try to apply some varint encoding. This PR adds two new types `Hash64` and `Hash128` which are produced by `StableHasher` and replace every use of storing a `u64` or `u128` that represents a hash.
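A rough sketch of why this helps: a hash is uniformly distributed, so a varint encoding almost always needs more bytes than the raw value. The `Hash64` below is a hypothetical stand-in written for this example (it is not the type added by this PR), and `write_leb128_u64` is a plain unsigned LEB128 encoder for comparison.

```rust
/// Hypothetical stand-in for a 64-bit hash newtype (assumption, not rustc's type).
#[derive(Clone, Copy, Debug, PartialEq, Eq)]
pub struct Hash64(u64);

impl Hash64 {
    pub fn new(n: u64) -> Self {
        Hash64(n)
    }

    /// Fixed-width encoding: always exactly 8 little-endian bytes.
    pub fn encode(self, out: &mut Vec<u8>) {
        out.extend_from_slice(&self.0.to_le_bytes());
    }

    /// Reads 8 bytes back; returns the hash and the remaining input.
    pub fn decode(bytes: &[u8]) -> Option<(Self, &[u8])> {
        if bytes.len() < 8 {
            return None;
        }
        let (head, rest) = bytes.split_at(8);
        let mut buf = [0u8; 8];
        buf.copy_from_slice(head);
        Some((Hash64(u64::from_le_bytes(buf)), rest))
    }
}

/// Unsigned LEB128: 7 payload bits per byte, high bit marks continuation.
pub fn write_leb128_u64(mut value: u64, out: &mut Vec<u8>) {
    loop {
        let byte = (value & 0x7f) as u8;
        value >>= 7;
        if value == 0 {
            out.push(byte);
            return;
        }
        out.push(byte | 0x80);
    }
}
```

For a value with its top bit set, the LEB128 path emits 10 bytes and needs a loop to do so, while the fixed-width path is a single 8-byte copy.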

Distribution of the byte lengths of leb128 encodings, from `x build --stage 2` with `incremental = true`

Before:

```
( 1) 373418203 (53.7%, 53.7%): 1
( 2) 196240113 (28.2%, 81.9%): 3
( 3) 108157958 (15.6%, 97.5%): 2
( 4) 17213120 ( 2.5%, 99.9%): 4
( 5) 223614 ( 0.0%,100.0%): 9
( 6) 216262 ( 0.0%,100.0%): 10
( 7) 15447 ( 0.0%,100.0%): 5
( 8) 3633 ( 0.0%,100.0%): 19
( 9) 3030 ( 0.0%,100.0%): 8
( 10) 1167 ( 0.0%,100.0%): 18
( 11) 1032 ( 0.0%,100.0%): 7
( 12) 1003 ( 0.0%,100.0%): 6
( 13) 10 ( 0.0%,100.0%): 16
( 14) 10 ( 0.0%,100.0%): 17
( 15) 5 ( 0.0%,100.0%): 12
( 16) 4 ( 0.0%,100.0%): 14
```

After:

```
( 1) 372939136 (53.7%, 53.7%): 1
( 2) 196240140 (28.3%, 82.0%): 3
( 3) 108014969 (15.6%, 97.5%): 2
( 4) 17192375 ( 2.5%,100.0%): 4
( 5) 435 ( 0.0%,100.0%): 5
( 6) 83 ( 0.0%,100.0%): 18
( 7) 79 ( 0.0%,100.0%): 10
( 8) 50 ( 0.0%,100.0%): 9
( 9) 6 ( 0.0%,100.0%): 19
```

The remaining 9- or 10-byte and 18- or 19-byte encodings are `u64` and `u128` values, respectively, that have their high bits set. As far as I can tell, these come primarily from `SwitchTargets`.
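The 9/10 and 18/19 figures follow from LEB128 carrying 7 payload bits per byte. As a small worked check (the helper name `leb128_len` is made up for this sketch), the encoded length can be computed from the number of significant bits:

```rust
/// Length in bytes of the unsigned LEB128 encoding of `value`.
/// Each output byte carries 7 bits, so the length is ceil(bits / 7),
/// with a minimum of one byte for zero.
pub fn leb128_len(value: u128) -> usize {
    let significant_bits = (128 - value.leading_zeros()) as usize;
    std::cmp::max(1, (significant_bits + 6) / 7)
}
```

A `u64` with 57 to 63 significant bits takes 9 bytes and one with all 64 takes 10; a `u128` with 120 to 126 significant bits takes 18 bytes and one with 127 or 128 takes 19.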

Spelling compiler

This is per https://github.com/rust-lang/rust/pull/110392#issuecomment-1510193656

I'm going to delay performing a squash because I really don't expect people to be perfectly happy w/ my changes, I really am a human and I really do make mistakes.

r? Nilstrieb

I'm going to be flying this evening, but I should be able to squash / respond to reviews w/in a day or two.

I tried to be careful about dropping changes to `tests`, afaict only two files had changes that were likely related to the changes for a given commit (this is where not having eagerly squashed should have given me an advantage), but, that said, picking things apart can be error prone.

Rollup of 7 pull requests

Successful merges:

- #109981 (Set commit information environment variables when building tools)

- #110348 (Add list of supported disambiguators and suffixes for intra-doc links in the rustdoc book)

- #110409 (Don't use `serde_json` to serialize a simple JSON object)

- #110442 (Avoid including dry run steps in the build metrics)

- #110450 (rustdoc: Fix invalid handling of nested items with `--document-private-items`)

- #110461 (Use `Item::expect_*` and `ImplItem::expect_*` more)

- #110465 (Assure everyone that `has_type_flags` is fast)

Failed merges:

r? `@ghost`

`@rustbot` modify labels: rollup

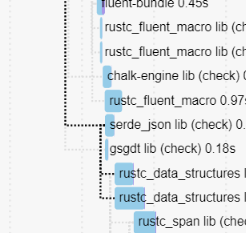

Don't use `serde_json` to serialize a simple JSON object

This avoids `rustc_data_structures` depending on `serde_json`, which allows it to be compiled much earlier, unlocking most of rustc.
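For a flat object with a handful of known fields, hand-written serialization is short. The following is only a sketch of the general approach; the `Value` enum and the `json_object` function are invented for illustration and are not the code from this PR.

```rust
use std::fmt::Write;

/// Minimal value type for the sketch: strings and unsigned integers only.
pub enum Value<'a> {
    Str(&'a str),
    Num(u64),
}

/// Appends `s` as a JSON string literal, escaping quotes, backslashes,
/// and control characters.
fn write_json_string(out: &mut String, s: &str) {
    out.push('"');
    for c in s.chars() {
        match c {
            '"' => out.push_str("\\\""),
            '\\' => out.push_str("\\\\"),
            '\n' => out.push_str("\\n"),
            '\r' => out.push_str("\\r"),
            '\t' => out.push_str("\\t"),
            c if (c as u32) < 0x20 => {
                // Writing to a String cannot fail.
                let _ = write!(out, "\\u{:04x}", c as u32);
            }
            c => out.push(c),
        }
    }
    out.push('"');
}

/// Serializes key/value pairs as a single flat JSON object.
pub fn json_object(fields: &[(&str, Value<'_>)]) -> String {
    let mut out = String::from("{");
    for (i, (key, value)) in fields.iter().enumerate() {
        if i > 0 {
            out.push(',');
        }
        write_json_string(&mut out, key);
        out.push(':');
        match value {
            Value::Str(s) => write_json_string(&mut out, s),
            Value::Num(n) => {
                let _ = write!(out, "{}", n);
            }
        }
    }
    out.push('}');
    out
}
```

This trades generality for having no dependency at all, which is the property that matters for the crate graph.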

This used to not matter, but after #110407 we're not blocked on fluent anymore, which means that it's now a blocking edge.

This saves a few more seconds.

cc `@Zoxc`, who added it recently

Implement StableHasher::write_u128 via write_u64

In https://github.com/rust-lang/rust/pull/110367#issuecomment-1510114777 the cachegrind diffs indicate that nearly all the regression is from this:

```

22,892,558 ???:<rustc_data_structures::sip128::SipHasher128>::slice_write_process_buffer

-9,502,262 ???:<rustc_data_structures::sip128::SipHasher128>::short_write_process_buffer::<8>

```

This happens because the diff for that perf run swaps a `Hash::hash` of a `u64` for one of a `u128`. But `slice_write_process_buffer` is a `#[cold]` function, intended for handling hashes of arbitrary-length byte arrays.

Using the much more optimizer-friendly `u64` path twice to hash a `u128` provides a nice perf boost in some benchmarks.
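The shape of the change can be sketched with a toy hasher. `ToyHasher` below is an FNV-1a-style stand-in invented for this example, not `StableHasher` or `SipHasher128`, and the low-half-first ordering is an assumption of the sketch.

```rust
use std::hash::Hasher;

/// Toy FNV-1a-style hasher, used only to show the delegation pattern.
pub struct ToyHasher {
    state: u64,
}

impl ToyHasher {
    pub fn new() -> Self {
        ToyHasher { state: 0xcbf29ce484222325 }
    }
}

impl Hasher for ToyHasher {
    fn finish(&self) -> u64 {
        self.state
    }

    /// General path for arbitrary-length input.
    fn write(&mut self, bytes: &[u8]) {
        for &b in bytes {
            self.state ^= u64::from(b);
            self.state = self.state.wrapping_mul(0x100000001b3);
        }
    }

    /// Fixed-size path: the length is known at compile time.
    fn write_u64(&mut self, i: u64) {
        self.write(&i.to_le_bytes());
    }

    /// Hash a `u128` as two `u64` halves (low half first, by assumption)
    /// instead of going through the arbitrary-length path.
    fn write_u128(&mut self, i: u128) {
        self.write_u64(i as u64);
        self.write_u64((i >> 64) as u64);
    }
}
```

In this toy hasher the two fixed-size writes produce the same result as hashing the 16 little-endian bytes directly, so the delegation changes only which code path runs.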

Tagged pointers, now with strict provenance!

This is a big refactor of tagged pointers in rustc, with three main goals:

1. Porting the code to strict provenance

2. Cleaning up the code

3. Documenting the code (and its safety invariants) better
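For readers unfamiliar with the first goal: under strict provenance a tagged pointer is never rebuilt from a bare integer; the tag is added to and removed from an existing pointer so that its provenance is kept. The sketch below is not the code from this PR. The function names are invented, and it uses byte-wise `wrapping_add`/`wrapping_sub` so that it builds on older toolchains; on recent ones `map_addr` expresses the same thing more directly.

```rust
/// Stores `tag` in the low alignment bits of `ptr`.
/// Assumes `ptr` is aligned for `T`; panics if the tag does not fit.
pub fn tag_ptr<T>(ptr: *const T, tag: usize) -> *const T {
    let mask = std::mem::align_of::<T>() - 1;
    assert!(tag <= mask, "tag does not fit in the alignment bits");
    // Offsetting the existing pointer keeps its provenance;
    // no integer-to-pointer cast is involved.
    ptr.cast::<u8>().wrapping_add(tag).cast::<T>()
}

/// Splits a tagged pointer back into the original pointer and the tag.
pub fn untag_ptr<T>(tagged: *const T) -> (*const T, usize) {
    let mask = std::mem::align_of::<T>() - 1;
    // Only the address is inspected here; the pointer itself is derived
    // from `tagged`, not from this integer.
    let tag = (tagged as usize) & mask;
    let ptr = tagged.cast::<u8>().wrapping_sub(tag).cast::<T>();
    (ptr, tag)
}
```

The untagged pointer returned by `untag_ptr` is still derived from the original allocation, so dereferencing it is as valid as dereferencing the pointer that was passed to `tag_ptr`.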

This PR has grown quite a bit (almost a complete rewrite at this point...), so I'm not sure what the best way to review it is, but reviewing commit-by-commit should be fine.

r? `@Nilstrieb`

Remove some suspicious cast truncations

These truncations were added a long time ago, and as best I can tell without a perf justification. And with rust-lang/rust#110410 it has become perf-neutral to not truncate anymore. We worked hard for all these bits, let's use them.
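As a generic illustration of what a truncating cast discards (this is not the code touched by this PR, and `truncated` is a name made up for the example), two distinct 64-bit values can become indistinguishable after `as u32`:

```rust
/// Keeps only the low 32 bits of a 64-bit hash.
pub fn truncated(hash: u64) -> u32 {
    hash as u32
}

/// Two distinct hashes that differ only in their upper 32 bits.
pub const HASH_A: u64 = 0x0000_0001_dead_beef;
pub const HASH_B: u64 = 0x0000_0002_dead_beef;
```

Here `HASH_A != HASH_B`, yet `truncated(HASH_A) == truncated(HASH_B)`, so any consumer of the truncated value sees a collision that the full hash would have avoided.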