Mirror of https://github.com/rust-lang/rust.git (synced 2025-05-14 02:49:40 +00:00)

Auto merge of #97585 - lqd:const-alloc-intern, r=RalfJung

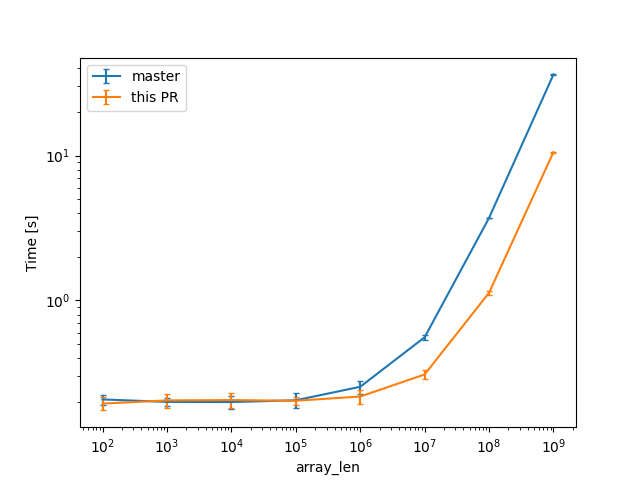

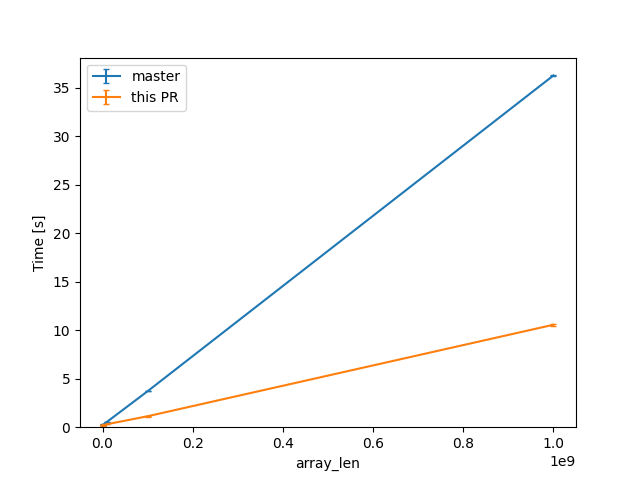

CTFE interning: don't walk allocations that don't need it

The interning of const allocations visits the mplace looking for references to intern. Walking big aggregates like big static arrays can be costly, so we only do it if the allocation we're interning contains references or interior mutability.

Walking ZSTs was avoided before, and this optimization is now applied to cases where there are no references/relocations either.

---

While initially looking at this in the context of #93215, I've been testing with smaller allocations than the 16GB one in that issue, and with different init/uninit patterns (esp. via padding).

In that example, by default, `eval_to_allocation_raw` is the heaviest query followed by `incr_comp_serialize_result_cache`. So I'll show numbers when incremental compilation is disabled, to focus on the const allocations themselves at 95% of the compilation time, at bigger array sizes on these minimal examples like `static ARRAY: [u64; LEN] = [0; LEN];`.

That is a close construction to parts of the `ctfe-stress-test-5` benchmark, which has const allocations in the megabytes, while most crates usually have way smaller ones. This PR will have the most impact in these situations, as the walk during the interning starts to dominate the runtime.

Unicode crates (some of which are present in our benchmarks) like `ucd`, `encoding_rs`, etc. come to mind as having bigger than usual allocations as well, because of big tables of code points (in the hundreds of KB, so still an order of magnitude or 2 less than the stress test).

In a check build, for a single static array shown above, from 100 to 10^9 u64s (for lengths in powers of ten), the constant factors are lowered:

(log scales for easier comparisons)

(linear scale for absolute diff at higher Ns)

For one of the alternatives of that issue

```rust
const ROWS: usize = 100_000;
const COLS: usize = 10_000;
static TWODARRAY: [[u128; COLS]; ROWS] = [[0; COLS]; ROWS];
```

we can see a similar reduction of around 3x (from 38s to 12s or so).

For the same size, the slowest case IIRC is when there are uninitialized bytes e.g. via padding

```rust
const ROWS: usize = 100_000;
const COLS: usize = 10_000;
static TWODARRAY: [[(u64, u8); COLS]; ROWS] = [[(0, 0); COLS]; ROWS];
```

then interning/walking does not dominate anymore (but means there is likely still some interesting work left to do here).

Compile times in this case rise up quite a bit, and avoiding interning walks has less impact: around 23%, from 730s on master to 568s with this PR.
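
As a rough sanity check on the sizes discussed in the description, the sketch below computes the byte size of a `ROWS x COLS` table for a given element type and the number of padding bytes in a `(u64, u8)` tuple. The helper names `table_bytes` and `tuple_padding` are made up for illustration and are not part of the PR. The exact size of `(u64, u8)` depends on the target, so the code only relies on there being some padding.

```rust
use std::mem::size_of;

const ROWS: u64 = 100_000;
const COLS: u64 = 10_000;

/// Hypothetical helper: bytes occupied by a `ROWS x COLS` array of `T`.
fn table_bytes<T>() -> u64 {
    ROWS * COLS * size_of::<T>() as u64
}

/// Hypothetical helper: bytes of `(u64, u8)` that belong to neither field.
/// Those bytes are padding and are left uninitialized in the const allocation.
fn tuple_padding() -> usize {
    size_of::<(u64, u8)>() - (size_of::<u64>() + size_of::<u8>())
}

fn main() {
    // `u128` is always 16 bytes, so the first 2D array is 16 * 10^9 bytes.
    assert_eq!(table_bytes::<u128>(), 16_000_000_000);

    println!("[[u128; COLS]; ROWS]      = {} bytes", table_bytes::<u128>());
    println!("[[(u64, u8); COLS]; ROWS] = {} bytes", table_bytes::<(u64, u8)>());
    println!("padding per (u64, u8)     = {} bytes", tuple_padding());
}
```

On targets where `u64` is 8-byte aligned the tuple is typically as large as a `u128`, which would explain why the description calls the two tables "the same size", although that equality is an assumption about the target rather than a language guarantee.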

commit

750d6f8545

    @@ -168,8 +168,51 @@ impl<'rt, 'mir, 'tcx: 'mir, M: CompileTimeMachine<'mir, 'tcx, const_eval::Memory
             mplace: &MPlaceTy<'tcx>,
             fields: impl Iterator<Item = InterpResult<'tcx, Self::V>>,
         ) -> InterpResult<'tcx> {
    -        // ZSTs cannot contain pointers, so we can skip them.
    -        if mplace.layout.is_zst() {
    +        // We want to walk the aggregate to look for references to intern. While doing that we
    +        // also need to take special care of interior mutability.
    +        //
    +        // As an optimization, however, if the allocation does not contain any references: we don't
    +        // need to do the walk. It can be costly for big arrays for example (e.g. issue #93215).
    +        let is_walk_needed = |mplace: &MPlaceTy<'tcx>| -> InterpResult<'tcx, bool> {
    +            // ZSTs cannot contain pointers, we can avoid the interning walk.
    +            if mplace.layout.is_zst() {
    +                return Ok(false);
    +            }
    +
    +            // Now, check whether this allocation could contain references.
    +            //
    +            // Note, this check may sometimes not be cheap, so we only do it when the walk we'd like
    +            // to avoid could be expensive: on the potentially larger types, arrays and slices,
    +            // rather than on all aggregates unconditionally.
    +            if matches!(mplace.layout.ty.kind(), ty::Array(..) | ty::Slice(..)) {
    +                let Some((size, align)) = self.ecx.size_and_align_of_mplace(&mplace)? else {
    +                    // We do the walk if we can't determine the size of the mplace: we may be
    +                    // dealing with extern types here in the future.
    +                    return Ok(true);
    +                };
    +
    +                // If there are no relocations in this allocation, it does not contain references
    +                // that point to another allocation, and we can avoid the interning walk.
    +                if let Some(alloc) = self.ecx.get_ptr_alloc(mplace.ptr, size, align)? {
    +                    if !alloc.has_relocations() {
    +                        return Ok(false);
    +                    }
    +                } else {
    +                    // We're encountering a ZST here, and can avoid the walk as well.
    +                    return Ok(false);
    +                }
    +            }
    +
    +            // In the general case, we do the walk.
    +            Ok(true)
    +        };
    +
    +        // If this allocation contains no references to intern, we avoid the potentially costly
    +        // walk.
    +        //
    +        // We can do this before the checks for interior mutability below, because only references
    +        // are relevant in that situation, and we're checking if there are any here.
    +        if !is_walk_needed(mplace)? {
                 return Ok(());
             }

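
The decision made by the `is_walk_needed` closure can be modeled outside the compiler. The sketch below is a toy model only: `ToyKind`, `ToyPlace`, and the `pointer_offsets` field are hypothetical stand-ins for the layout and relocation data that the real code reads through `self.ecx`, and it treats the whole place as one range instead of querying an `AllocRef`.

```rust
/// Hypothetical stand-in for the type kinds the closure distinguishes.
#[derive(Clone, Copy)]
enum ToyKind {
    Array,
    Slice,
    Other,
}

/// Hypothetical stand-in for a memory place being interned.
struct ToyPlace {
    kind: ToyKind,
    /// `None` models a place whose size cannot be determined.
    size: Option<u64>,
    /// Offsets at which the place's bytes hold pointers (its "relocations").
    pointer_offsets: Vec<u64>,
}

/// Mirrors the order of checks in the diff: ZST, then arrays/slices, then the default.
fn is_walk_needed(place: &ToyPlace) -> bool {
    // Zero-sized places cannot contain pointers.
    if place.size == Some(0) {
        return false;
    }
    // Only arrays and slices get the relocation check, since they can be large.
    if matches!(place.kind, ToyKind::Array | ToyKind::Slice) {
        if place.size.is_none() {
            return true; // unknown size: stay conservative and walk
        }
        if place.pointer_offsets.is_empty() {
            return false; // no relocations, so no references to intern
        }
    }
    // In the general case, walk.
    true
}

fn main() {
    let zeros = ToyPlace { kind: ToyKind::Array, size: Some(8_000_000), pointer_offsets: vec![] };
    let refs = ToyPlace { kind: ToyKind::Slice, size: Some(16), pointer_offsets: vec![0, 8] };
    let small_struct = ToyPlace { kind: ToyKind::Other, size: Some(4), pointer_offsets: vec![] };

    assert!(!is_walk_needed(&zeros)); // big array of plain integers: skipped
    assert!(is_walk_needed(&refs)); // slice of references: walked
    assert!(is_walk_needed(&small_struct)); // non-array aggregate: walked unconditionally
}
```

The last case reflects the comment in the diff: the relocation check is not applied to all aggregates, only to the types where the avoided walk is likely to be expensive.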

    @@ -942,6 +942,11 @@ impl<'tcx, 'a, Tag: Provenance, Extra> AllocRef<'a, 'tcx, Tag, Extra> {
                 .check_bytes(&self.tcx, self.range.subrange(range), allow_uninit, allow_ptr)
                 .map_err(|e| e.to_interp_error(self.alloc_id))?)
         }
    +
    +    /// Returns whether the allocation has relocations for the entire range of the `AllocRef`.
    +    pub(crate) fn has_relocations(&self) -> bool {
    +        self.alloc.has_relocations(&self.tcx, self.range)
    +    }
     }

     impl<'mir, 'tcx: 'mir, M: Machine<'mir, 'tcx>> InterpCx<'mir, 'tcx, M> {


    @@ -537,21 +537,26 @@ impl<Tag: Provenance, Extra> Allocation<Tag, Extra> {
     /// Relocations.
     impl<Tag: Copy, Extra> Allocation<Tag, Extra> {
         /// Returns all relocations overlapping with the given pointer-offset pair.
    -    pub fn get_relocations(&self, cx: &impl HasDataLayout, range: AllocRange) -> &[(Size, Tag)] {
    +    fn get_relocations(&self, cx: &impl HasDataLayout, range: AllocRange) -> &[(Size, Tag)] {
             // We have to go back `pointer_size - 1` bytes, as that one would still overlap with
             // the beginning of this range.
             let start = range.start.bytes().saturating_sub(cx.data_layout().pointer_size.bytes() - 1);
             self.relocations.range(Size::from_bytes(start)..range.end())
         }

    +    /// Returns whether this allocation has relocations overlapping with the given range.
    +    ///
    +    /// Note: this function exists to allow `get_relocations` to be private, in order to somewhat
    +    /// limit access to relocations outside of the `Allocation` abstraction.
    +    ///
    +    pub fn has_relocations(&self, cx: &impl HasDataLayout, range: AllocRange) -> bool {
    +        !self.get_relocations(cx, range).is_empty()
    +    }
    +
         /// Checks that there are no relocations overlapping with the given range.
         #[inline(always)]
         fn check_relocations(&self, cx: &impl HasDataLayout, range: AllocRange) -> AllocResult {
    -        if self.get_relocations(cx, range).is_empty() {
    -            Ok(())
    -        } else {
    -            Err(AllocError::ReadPointerAsBytes)
    -        }
    +        if self.has_relocations(cx, range) { Err(AllocError::ReadPointerAsBytes) } else { Ok(()) }
         }

         /// Removes all relocations inside the given range.

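
The `pointer_size - 1` adjustment in `get_relocations` is easy to get wrong, so here is a minimal sketch of the same overlap query. It uses a standard `BTreeMap` keyed by the offset where each pointer starts, which is a substitution for the compiler's own relocation map, and assumes a hypothetical 8-byte pointer size. The free function `has_relocations` and the `u32` tag type are illustrative only.

```rust
use std::collections::BTreeMap;

/// Assumed pointer width in bytes (as on a 64-bit target).
const POINTER_SIZE: u64 = 8;

/// Returns whether any pointer recorded in `relocations` overlaps `start..end`.
///
/// A pointer starting at offset `o` covers `o..o + POINTER_SIZE`, so one that starts up to
/// `POINTER_SIZE - 1` bytes before `start` still overlaps the beginning of the range.
fn has_relocations(relocations: &BTreeMap<u64, u32>, start: u64, end: u64) -> bool {
    let lookup_start = start.saturating_sub(POINTER_SIZE - 1);
    relocations.range(lookup_start..end).next().is_some()
}

fn main() {
    let mut relocations = BTreeMap::new();
    relocations.insert(16u64, 1u32); // one pointer occupying bytes 16..24

    assert!(!has_relocations(&relocations, 0, 16)); // ends right before the pointer
    assert!(has_relocations(&relocations, 20, 28)); // starts in the middle of the pointer
    assert!(has_relocations(&relocations, 23, 24)); // touches only the pointer's last byte
    assert!(!has_relocations(&relocations, 24, 32)); // starts right after the pointer
}
```

Without the back-off, the query for `20..28` would miss the pointer at offset 16 even though four of its bytes fall inside the range.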
